Thanks blueferret! No worries at all! I was thinking of adding the watch model as a link on my site for anyone to download - I will try and drop it on in the near future.

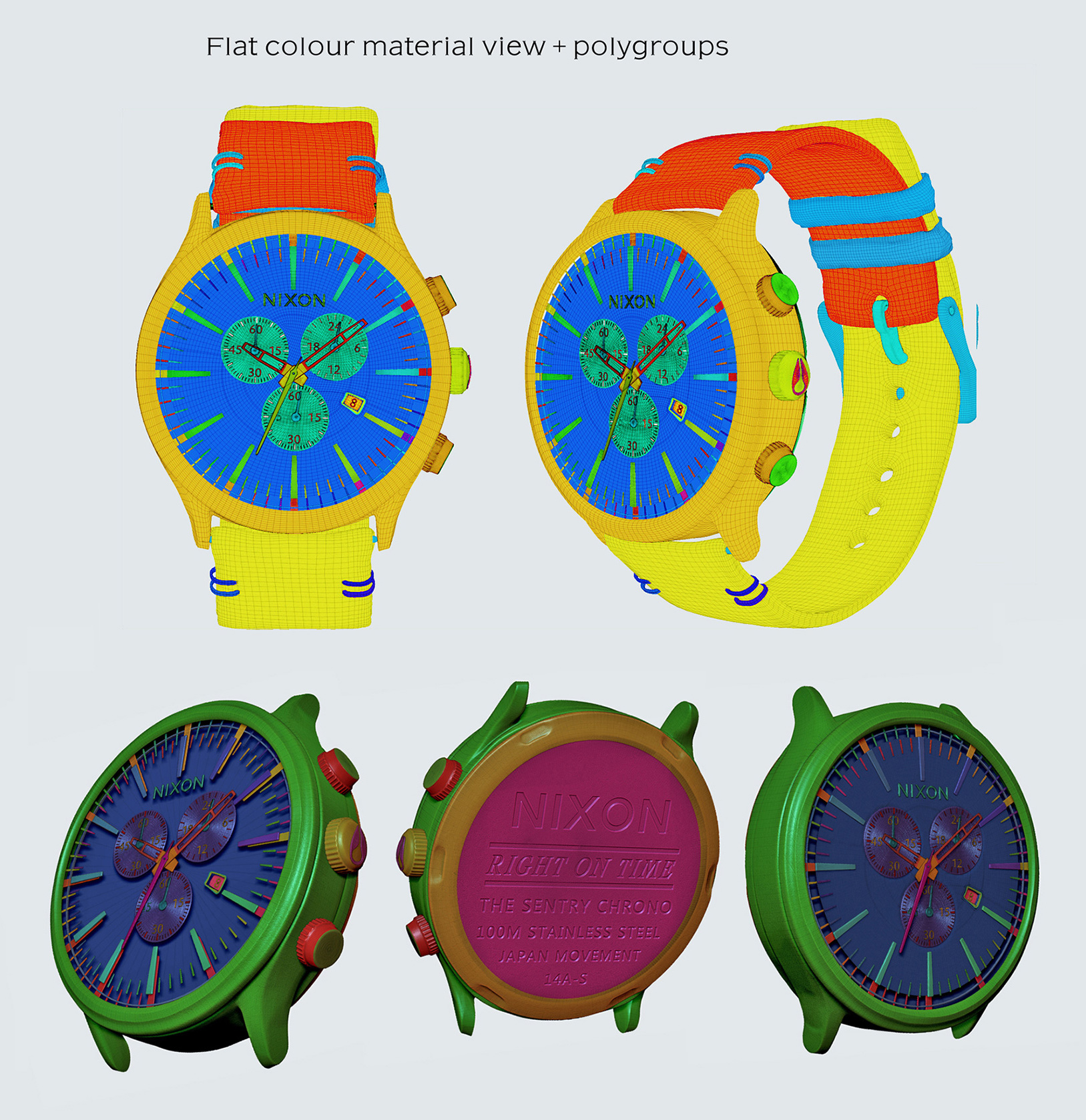

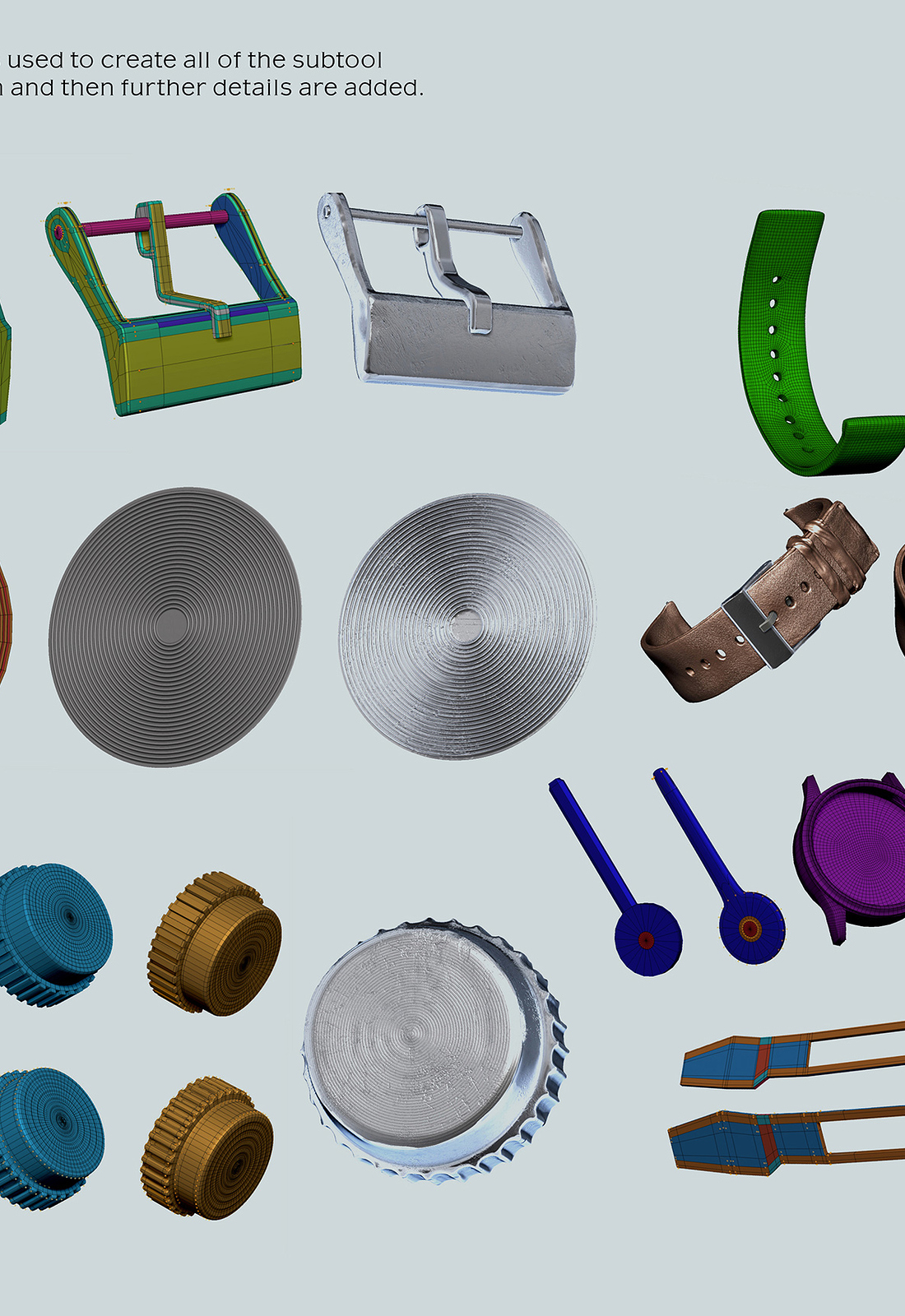

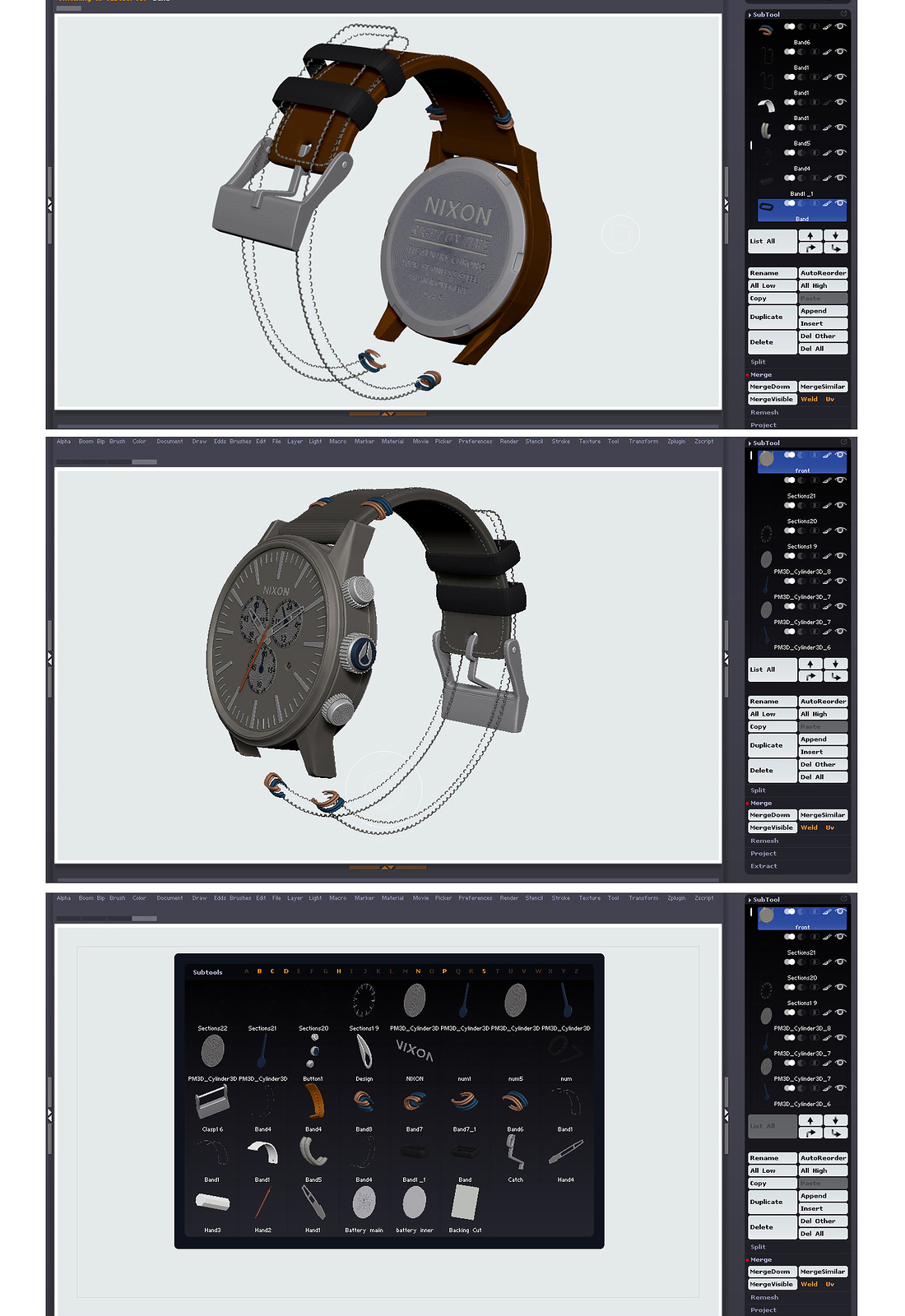

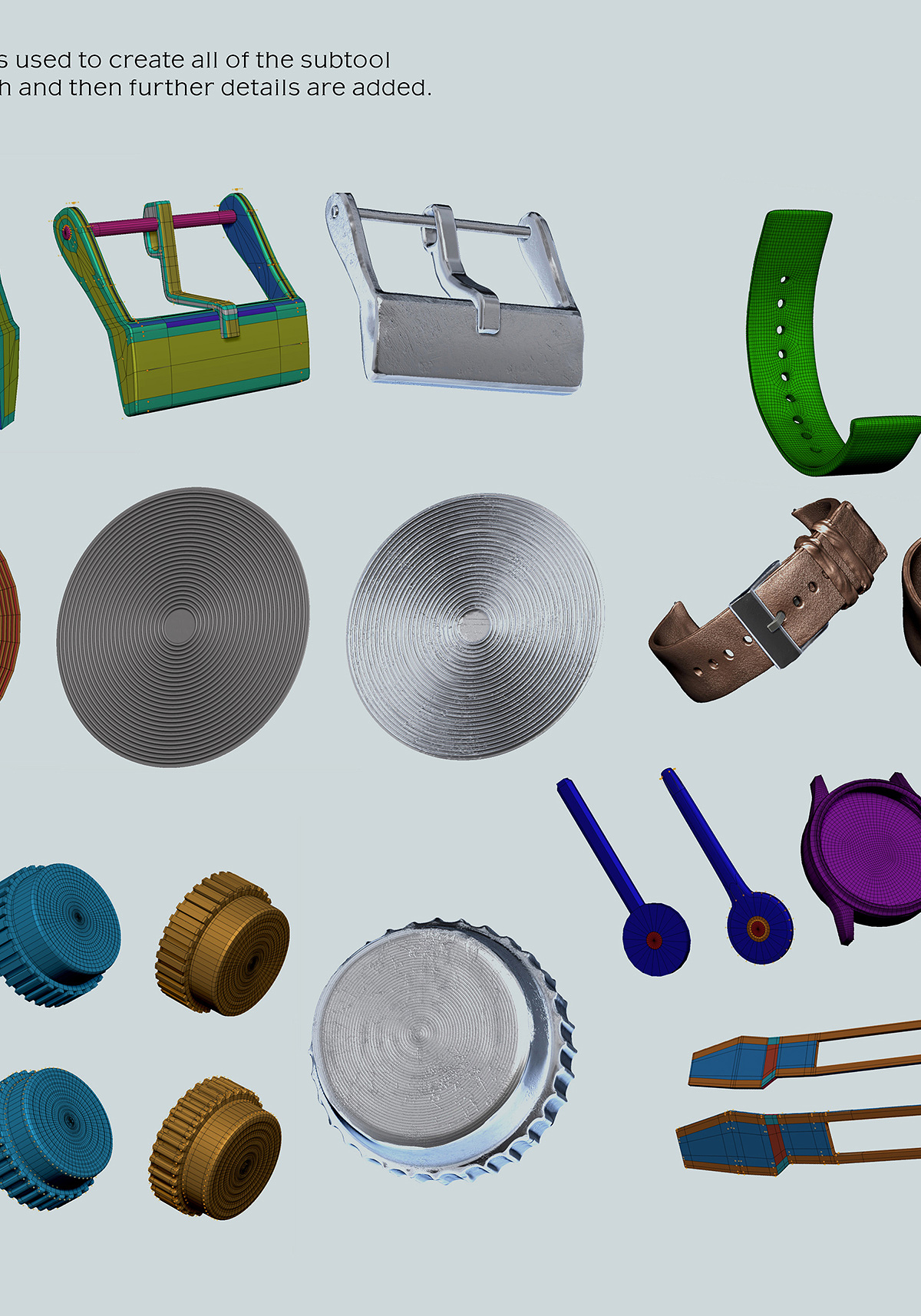

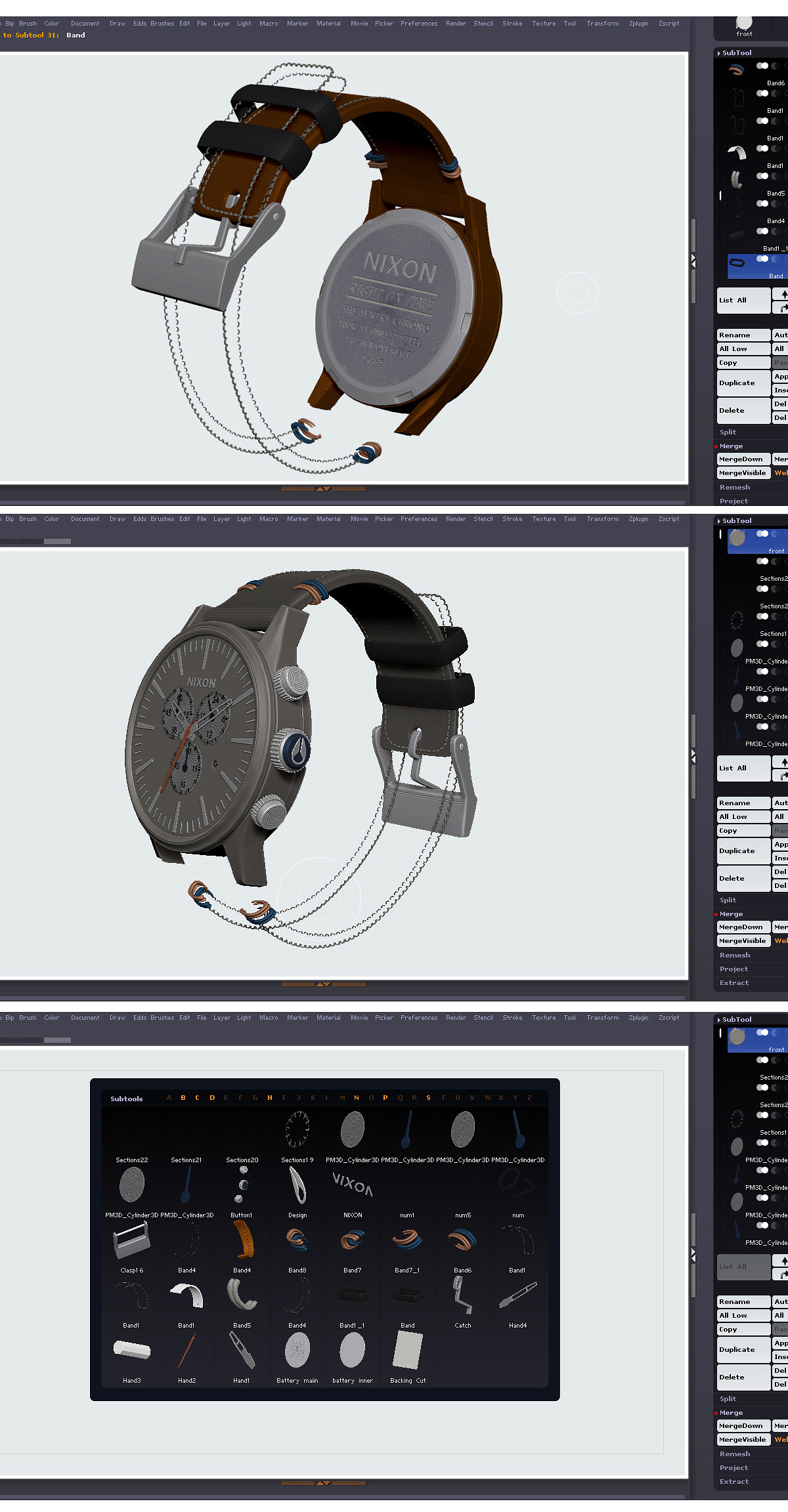

Looking at the polycount, the lowest res version of the finished watch model was about 400K. When working I always try to keep the subtools as low res as possible for easier adjustments at a later stage, but with enough detail that I am happy with them. The high res details shown were created by subdividing the subtools into millions of polygons and applying alpha maps of scratches, dirt maps, textures, painting etc. to bring all the definition out. The subtools may have 6-7 levels of subdivision; the highest levels are then used to create displacement maps, which are applied to the low resolution meshes. The high res maps do most of the work for the detailing and the mesh remains at a much lower polycount.
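To give a feel for how quickly 6-7 subdivision levels add up, here is a rough sketch. It assumes an all-quad mesh where each subdivision level splits every quad into four, with level 1 as the base mesh; the function name and the 2,000-poly starting figure are made up for illustration and are not numbers from the watch itself.

```python
def polys_at_level(base_polys: int, level: int) -> int:
    """Estimate the quad count at a subdivision level (level 1 = base mesh).

    Assumes an all-quad mesh where every subdivision quadruples the count.
    """
    if level < 1:
        raise ValueError("level must be 1 or higher")
    return base_polys * 4 ** (level - 1)


base = 2_000  # hypothetical base quad count for a single subtool
for level in range(1, 8):
    print(f"level {level}: {polys_at_level(base, level):,} polys")
```

Under those assumptions a 2,000-poly subtool reaches roughly 8.2 million polys at level 7, which is why the displacement map ends up carrying the detail rather than the exported mesh.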

Sometimes I will use HD geometry, but I find it a little trickier to work with than the usual high/low subdivision method. I generally try to add more definition to the more visible subtools, but I do enjoy detailing every little screw and tiny part when working!!

For the displacement maps used on the watch I exported the maps with quite basic settings: ‘map size’ 1024 or 2048, saved as 16-bit TIFFs with ‘flipV’ enabled, ‘DPSubPix’ set to 0, and no ‘adaptive’ or ‘smoothUV’ enabled. I tend to send the finished lower res models into 3DS Max or Unreal Engine etc. as FBX and then apply a smoothing/TurboSmooth modifier to them to render and smooth out any aliased geometry.
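As a quick sanity check on those map sizes, this small sketch estimates the raw pixel data for a square 16-bit map. It assumes a single grayscale channel and no compression, so real TIFF files will differ a little; the function name is just for illustration.

```python
def raw_map_bytes(map_size: int, bits_per_pixel: int = 16, channels: int = 1) -> int:
    """Uncompressed pixel data size in bytes for a square map (assumed layout)."""
    return map_size * map_size * channels * bits_per_pixel // 8


for size in (1024, 2048):
    mib = raw_map_bytes(size) / (1024 * 1024)
    print(f"{size} x {size}: {mib:.0f} MiB uncompressed")
```

Doubling the map size quadruples the data, so 2048 maps are probably best saved for the subtools that are most visible.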

With a lot of my personal projects, I try to keep the geometry at a sensible level without worrying too much about polycounts, but if it starts running very slowly on my computer, I will definitely optimise or retopologise! If the scene has a lot of elements in it, I would definitely consider polycounts more, especially for viewport and overall performance and to keep file sizes from growing!

Cheers!