Hi,

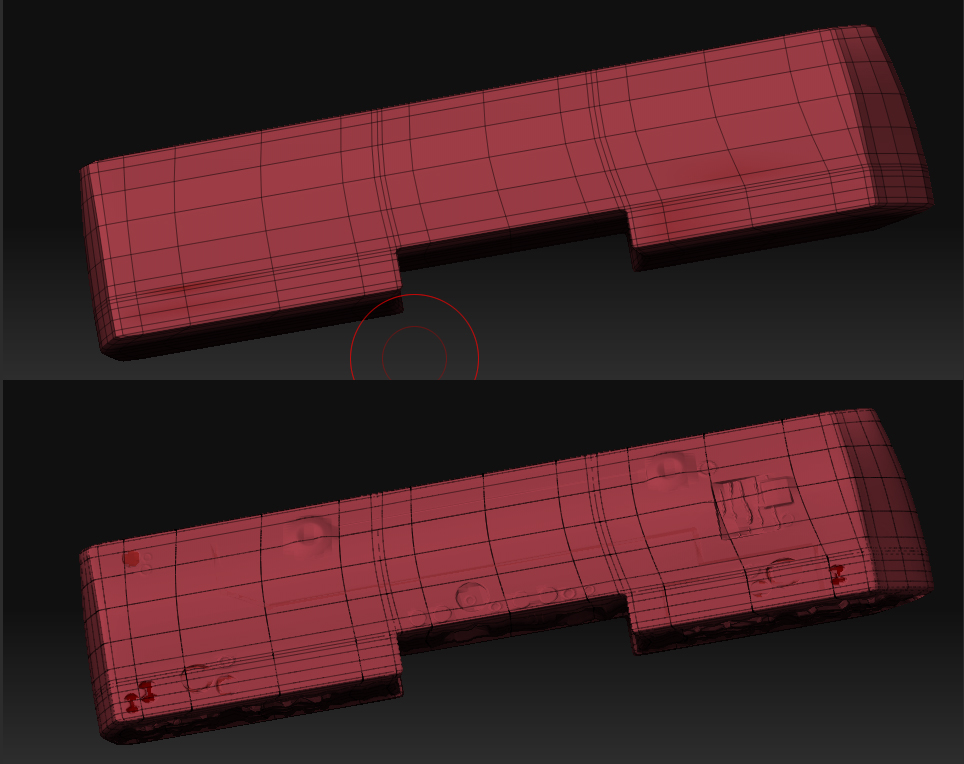

I’ve been trying to export a normal map (made with ZMapper) and a texture (Color to Texture in ZBrush), and I’ve run into a problem. I’m using Maya as my rendering software.

The normal map comes through fine, but the texture lines up in some areas and is slightly off in others.

I exported the normal map after importing the original mesh into the first subdivision level.

I’m using “Maya_ObjectSpace_BestQuality.zmp” in the ZMapper configuration.

I’m guessing the texture is slightly off because the original mesh is slightly bigger than it becomes after being subdivided in ZBrush. If so, how can I fit the texture?

Thank you!